Our History

Hiperwall started as a research project at the University of California, Irvine in 2004

Hiperwall began as a research project funded by the National Science Foundation at the University of California, Irvine in 2004. The goal was to build the world’s highest resolution (at the time) tiled display wall, more commonly known as a video wall.

It was built to enable scientific visualization on an unprecedented scale, and we regularly showed imagery and data sets that measured hundreds of millions of pixels and gigabytes of data.

The first Hiperwall system was a pretty impressive collection of hardware for 2005, with 50 processors (more were added later), 50 GB of RAM, more than 10 TB of storage (Western Digital gave us 5 TB worth of drives for our RAID system), and 50 of the nicest monitors available. But it was the software that really made it special. We named the project HIPerWall, which is an acronym for Highly Interactive Parallelized Display Wall. We took that interactivity seriously: we didn’t just want to show a 400-million-pixel image of a rat brain, we wanted users to be able to pan and zoom the image to visually explore the content. This user interactivity set the HIPerWall software apart from the other tiled display software available at the time and is still a major advantage over competing systems.

Sung-Jin Kim, author of the original software, in front of the first HIPerWall system

From the start, we designed the system to be highly interactive and flexible, and we used parallel and distributed computing techniques to provide the high-performance visualization required to drive a 200-million-pixel display system.

Interactivity becomes essential when a crisis arises or when information from a new source needs to be displayed urgently. Because our software is designed for easy interactivity, a system operator can quickly place the new source or image anywhere on the wall and even replicate it to multiple walls. With these interactive design features built into Hiperwall software, the ability to manipulate content is ready when our customers need it.

Early Research

Biophysical Analysis

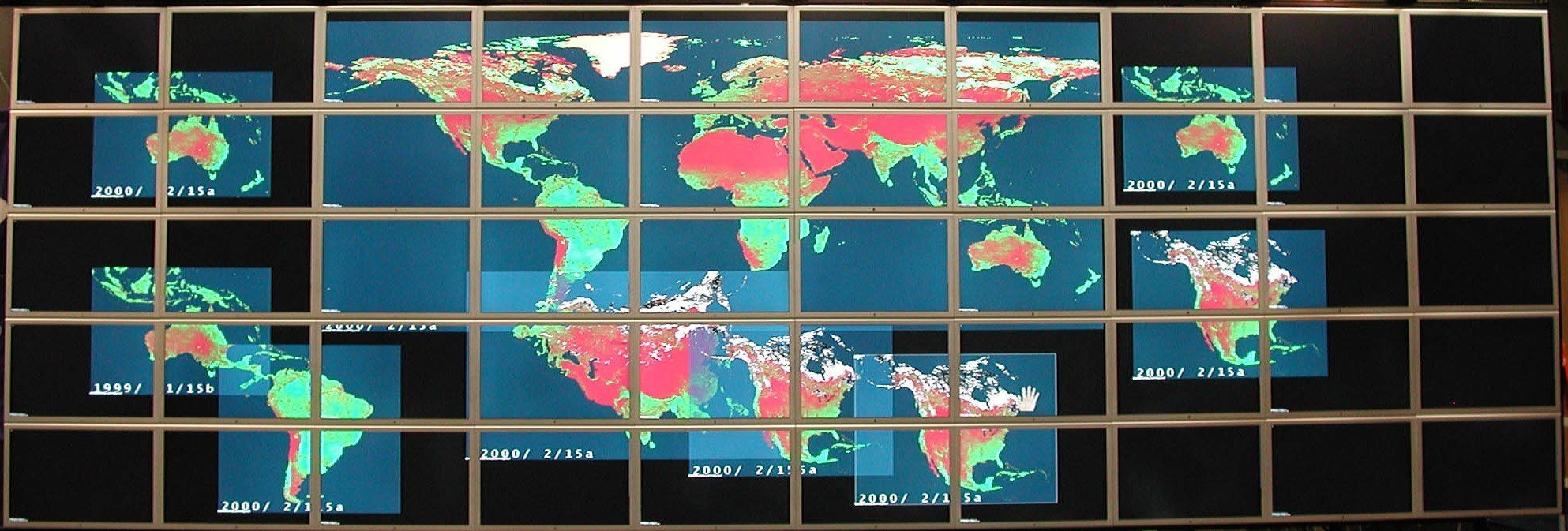

Dr. Sung-Jin Kim, who wrote the original software, turned to distributed visualization of other things, like large datasets and movies, also in a highly interactive manner. The dataset he tackled first was Normalized Difference Vegetation Index (NDVI) data, so the new software was initially named NDVIviewer. The software figured out exactly what needed to be rendered where and did so very rapidly. The NDVI data comprised sets of 3D blocks of data that represented vegetation measured over a particular area over time, so each layer was a different timestep. The software allowed the user to navigate forward and backward among these timesteps in order to animate the change in vegetation.

The NDVIviewer running on HIPerWall showing an NDVI dataset.

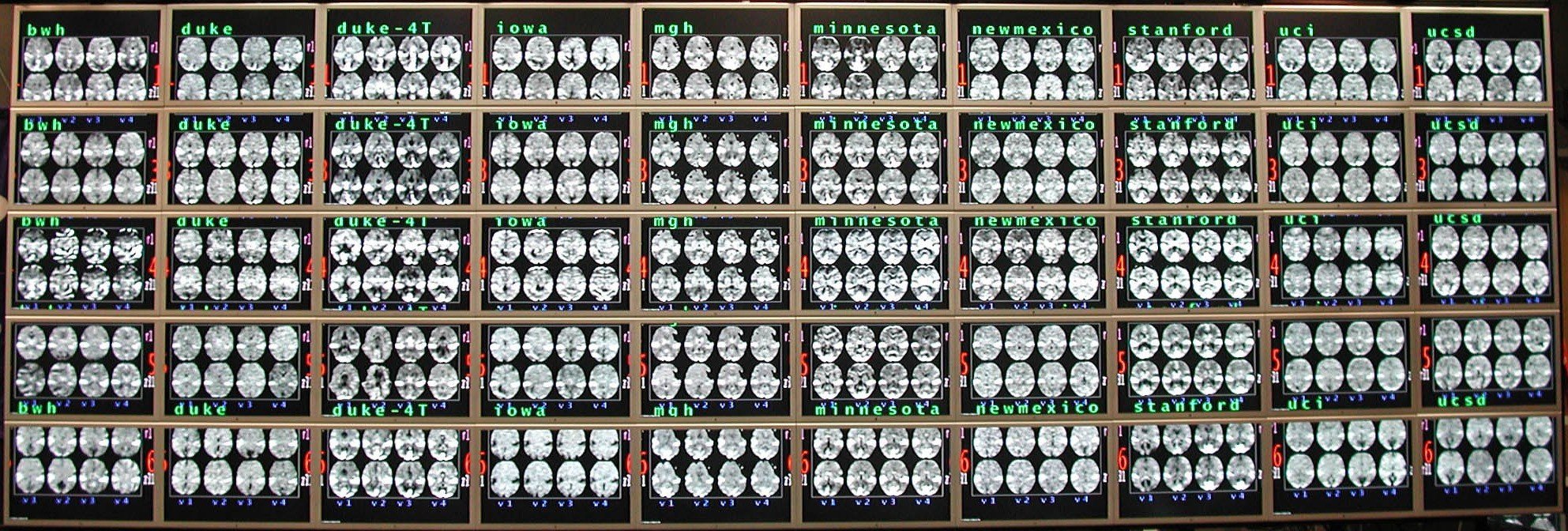

Medical Analysis

NDVIviewer was able to show an amazing set of functional MRI (fMRI) brain scans. This 800 MB dataset held fMRI brain image slices for 5 test subjects who were each imaged on 10 different fMRI systems around the country, for a total of 50 sets of brain scans. The aim was to see whether machines with different calibration or from different manufacturers yield significantly different images (they sure seem to do so). NDVIviewer allowed each scan to be moved anywhere on the HIPerWall, and the user could step through an individual brain by varying the depth or through all of them simultaneously. In addition, the Cg shader image processing could be used to filter and highlight the images in real time. Overall, this was an excellent use of the huge visualization space provided by HIPerWall.

Our Founders

Stephen Jenks, Ph.D. | Co-Founder / Chief Scientist

Stephen Jenks has developed parallel and distributed computer systems for 25 years. At Northrop Grumman, he developed advanced avionics and medical computer systems. He joined the Electrical Engineering and Computer Science faculty at the University of California, Irvine, where he taught and performed research in parallel and distributed computing and processor architecture. He was Co-Principal Investigator of the NSF-funded HIPerWall project, which developed the technology for the world's highest resolution tiled display. He co-founded Hiperwall, Inc. to commercialize the tiled display technology into a cost-effective software solution that uses commodity computing and display components. As Hiperwall's Chief Scientist, he continues to develop advanced technology to make the company's products even more powerful and easy to use. He earned a Bachelor of Science degree in Electrical and Computer Engineering from Carnegie Mellon and a Master of Science degree and Ph.D. in Computer Engineering from the University of Southern California.

Sung-Jin Kim, Ph.D. | Co-Founder / Chief Technology Officer

Sung-Jin Kim has researched distributed computing and distributed visualization for 14 years. As part of his Ph.D. work at the University of California, Irvine, he developed early versions of software for HIPerWall, a National Science Foundation (NSF)-funded project that created the technology for the world's highest resolution tiled display. Upon completing his Ph.D., he joined the California Institute for Telecommunications and Information Technology (Calit2) at the University of California, Irvine as a post-doctoral researcher, where he continued his research on distributed visualization and improved the software for HIPerWall. He co-founded Hiperwall, Inc. to commercialize the tiled display technology into a cost-effective software solution that uses commodity computing and display components. As Hiperwall's Chief Technology Officer, he provides the overall vision, strategy and leadership for development of the company’s innovative products and solutions. He earned a Bachelor of Science degree in Mathematics from Korea University, a Master of Science degree in Computer Science from the University of Chicago and a Ph.D. in Computer Engineering from the University of California, Irvine.

What's in a Name?

Many people are confused by the spelling of the Hiperwall® name, often misspelling it “Hyperwall” or even “Hyper Wall.” This is how our name evolved.

The goal of the research project led by Falko Kuester and Dr. Stephen Jenks, when they were UCI professors, was to develop technology to drive extremely high-resolution tiled-display walls (video walls). Their approach differed from that of other tiled-display systems in that they wanted their system to scale easily to huge sizes, so they needed to avoid the potential bottleneck of a centralized rendering system that most other video walls had. Therefore, they put powerful computers behind the displays. Each of these display nodes performed all the rendering work for its own display and had little interaction with other display nodes.
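As a rough illustration of why this design avoids a central bottleneck, the sketch below shows how a display node could work out, on its own, which region of a large source image it needs to render. It is a minimal sketch under stated assumptions, not the actual HIPerWall code: the `Rect` type, the `tile_source_rect` function, the pan and zoom parameters, and the 2560 x 1600 tile size are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle in source-image pixel coordinates."""
    x: float
    y: float
    w: float
    h: float


def tile_source_rect(col, row, tile_w, tile_h, pan_x, pan_y, zoom):
    """Return the part of the source image shown by the tile at (col, row).

    Hypothetical model (an assumption, not taken from the original software):
      - all tiles have the same resolution, tile_w x tile_h pixels
      - (pan_x, pan_y) is the image coordinate at the wall's top-left corner
      - zoom is the number of wall pixels per image pixel
    """
    if zoom <= 0:
        raise ValueError("zoom must be positive")
    # Position of this tile's top-left corner in wall pixel coordinates.
    wall_x = col * tile_w
    wall_y = row * tile_h
    # Map the tile's wall rectangle back into image coordinates.
    return Rect(
        x=pan_x + wall_x / zoom,
        y=pan_y + wall_y / zoom,
        w=tile_w / zoom,
        h=tile_h / zoom,
    )


if __name__ == "__main__":
    # Each node needs only its own grid position plus the shared pan/zoom state.
    for row in range(2):
        for col in range(2):
            print((col, row), tile_source_rect(col, row, 2560, 1600, 0, 0, 0.5))
```

In this model, a pan or zoom by the user only requires broadcasting three small numbers to every node. Each node then loads and draws just its own region, so no single machine has to render the whole wall.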

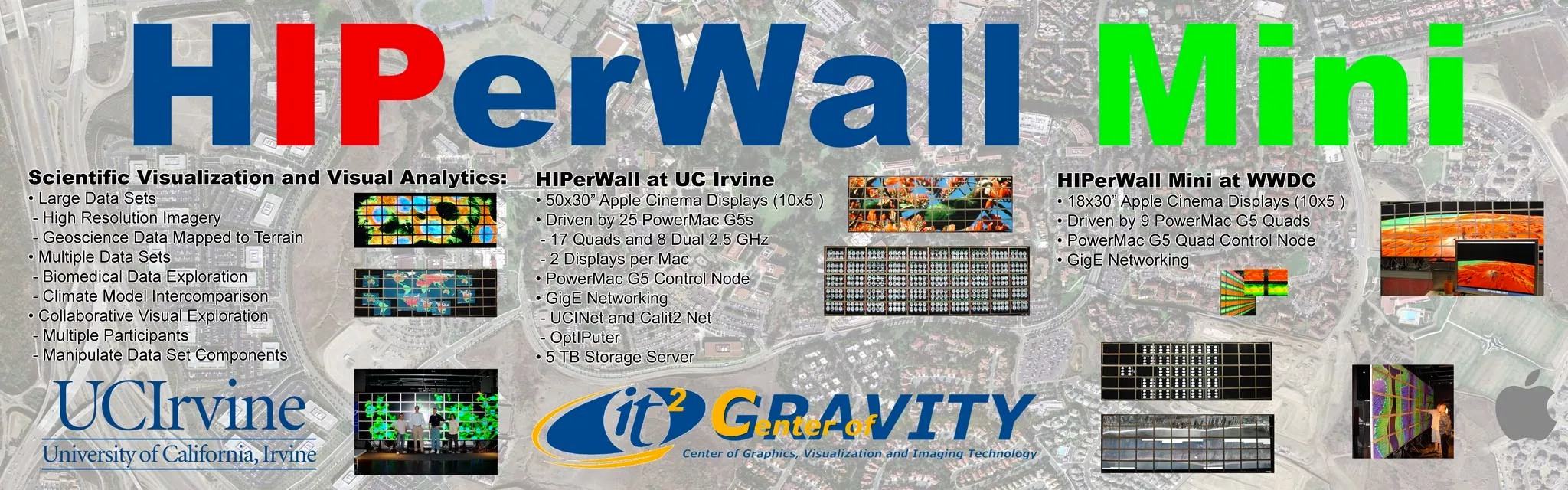

Because this was a highly distributed and parallel computing approach, they called it the Highly Interactive Parallelized display Wall, or HIPerWall for short. The acronym is a little forced because they had to ignore the word “display,” but the idea is pretty clear. You can see the research project logo in the image above, which promoted the HIPerWall Mini system they showed at Apple’s Worldwide Developers Conference in 2006. With a screen resolution of 72 million pixels, the HIPerWall Mini was one of the highest-resolution displays in the world at the time.

In the picture above, you’ll note that the “IP” in HIPerWall is highlighted in a different color. It was highlighted because they based their technology on the Internet Protocol (IP) rather than proprietary protocols or networks, so the system could interoperate with and use standard, off-the-shelf equipment. This is one of the main reasons Hiperwall systems are so cost-competitive today: you can use our advanced software on commercial off-the-shelf (COTS) computers, displays, and networks to create a powerful video wall system without proprietary servers, amplifiers, and non-scalable bottlenecks.

Before Hiperwall became a commercial entity, the name was changed from HIPerWall to Hiperwall. Hiperwall is a registered trademark owned by the University of California (UC Irvine, in particular) and exclusively licensed for commercial use by Hiperwall, Inc.

Hiperwall, Inc. is a leading company in video wall software and distributed visualization technology.

Contact Information

1.888.520.1760

23351 Madero, Suite 250

Mission Viejo, CA 92691

©2024 Hiperwall, Inc. All rights reserved